Designing Trust Into AI Products

The most interesting part of building AI products has not been model quality by itself. It has been the question that comes right after the first good demo: why should anyone trust this system enough to use it in a real workflow?

That question changes the product. It moves the conversation away from raw capability and into evidence, control, and accountability. Once that happens, you stop thinking about trust as a policy document or a future compliance task. It becomes interface work.

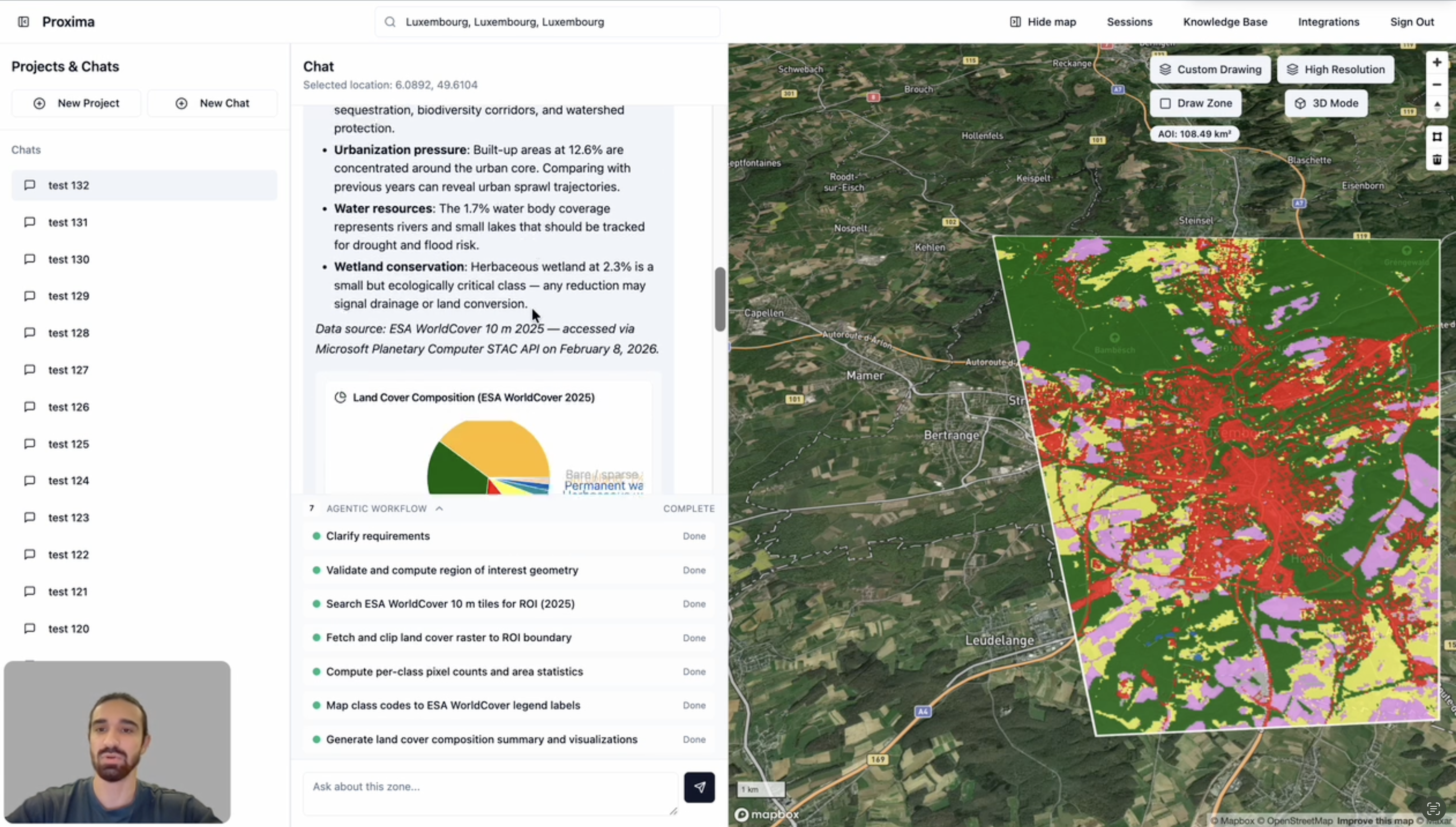

While building Proxima, that question showed up quickly. The product was a multi-agent GIS platform that analyzed imagery and spatial data, orchestrated specialist agents through LangGraph and LangChain, and generated map layers, charts, and other visual outputs people could use in real decisions. The more capable it became, the less acceptable opaque output became.

Capability Is Only the Entry Point

Early AI demos create a dangerous kind of optimism. The system can produce a convincing answer, summarize quickly, and make the product feel far more capable than the previous version. But capability without context has a short shelf life.

As soon as users depend on an answer, they want to know where it came from, what assumptions shaped it, and how much confidence they should place in it. If the product cannot answer those questions, people either stop trusting it or start trusting it for the wrong reasons.

That is the point where AI product design gets serious.

Multi-Agent Systems Need Visible Work

In a multi-agent product, trust problems multiply if orchestration stays invisible. Users do not need every internal implementation detail, but they do need to know what kind of work is happening, what step is still running, and where the evidence is coming from.

In Proxima, a useful answer might depend on geometry validation, imagery selection, raster analysis, evidence collection, and synthesis before the final result appears. If all of that collapses into one polished response, the interface asks for too much faith.
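
One way to keep that work visible is to have each step report its status as it runs, so the interface can show what has finished and what is still in progress. The sketch below is a minimal illustration in plain Python, not Proxima's actual implementation. The `StepTracker` class is hypothetical, and the step names are simply the ones listed above.

```python
from dataclasses import dataclass, field
from enum import Enum


class StepStatus(str, Enum):
    PENDING = "pending"
    RUNNING = "running"
    DONE = "done"


@dataclass
class StepTracker:
    """Tracks the status of named workflow steps (hypothetical helper)."""

    steps: list[str]
    status: dict[str, StepStatus] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Every step starts as pending so the UI can show the full plan up front.
        self.status = {name: StepStatus.PENDING for name in self.steps}

    def start(self, name: str) -> None:
        self.status[name] = StepStatus.RUNNING

    def finish(self, name: str) -> None:
        self.status[name] = StepStatus.DONE

    def summary(self) -> list[str]:
        # One line per step, in plan order, suitable for a progress panel.
        return [f"{name}: {self.status[name].value}" for name in self.steps]


tracker = StepTracker(
    ["geometry validation", "imagery selection", "raster analysis",
     "evidence collection", "synthesis"]
)
tracker.start("geometry validation")
tracker.finish("geometry validation")
tracker.start("imagery selection")
print("\n".join(tracker.summary()))
```

The point of the design is that the full plan is known before any step runs, so the user sees pending work as well as completed work.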

Citations Are Product Features

I used to think of citations mostly as supporting detail. In practice, they change how users behave. When a product shows the sources behind an answer, users stop treating the output as a magic statement and start treating it as something they can inspect.

That changes the relationship between the user and the system. The product becomes less of an oracle and more of a working partner. It says, in effect, this is the answer I produced, and this is the trail you can use to verify it.

That shift matters because it reduces both blind trust and blanket skepticism. Good citations create a middle ground where users can move faster without losing judgment.
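
Treating citations as a feature usually means they travel with the answer as structured data rather than as text appended at the end. A rough sketch of that idea follows. The `Citation` and `Answer` types and all field names are assumptions made for illustration, and the sample values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    source_id: str     # hypothetical identifier for a dataset or document
    title: str
    retrieved_on: str  # ISO date string, kept simple for this sketch


@dataclass(frozen=True)
class Answer:
    text: str
    citations: tuple[Citation, ...]

    def is_inspectable(self) -> bool:
        # An answer with no sources gives the user nothing to verify.
        return len(self.citations) > 0

    def render(self) -> str:
        # Number each source so the interface can link claims back to it.
        lines = [self.text, "", "Sources:"]
        if not self.citations:
            lines.append("  (none provided; treat this answer as unverified)")
        for i, c in enumerate(self.citations, start=1):
            lines.append(
                f"  [{i}] {c.title} ({c.source_id}, retrieved {c.retrieved_on})"
            )
        return "\n".join(lines)


# Placeholder values, for illustration only.
answer = Answer(
    text="Example answer text.",
    citations=(Citation("src-001", "Example imagery catalog", "2024-01-01"),),
)
print(answer.render())
```

Making the empty case explicit matters as much as the populated one: an answer without sources should say so instead of looking the same as a verified one.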

Visual Outputs Need Provenance Too

A polished chart or pixel-perfect map layer can feel more authoritative than text, even when the supporting reasoning is thin. That makes visual output more dangerous, not safer, when provenance is missing.

I have become much more careful about tying visuals to source dates, citations, workflow steps, and confidence cues. The better the output looks, the more disciplined the product has to be about showing how it was produced.

Confidence Should Clarify, Not Perform

Confidence indicators are useful, but only when they help people make a better decision. A fake sense of precision is worse than no confidence signal at all.

If the product says 93 percent confidence, most users will naturally ask what that actually means. Does it mean the answer is factually correct? Does it mean the retrieval quality was high? Does it mean similar patterns existed in prior data? If the system cannot explain the signal, the number quickly becomes theater.

I have found it more useful to think of confidence as a framing tool. It should help the user decide whether to move forward immediately, inspect further, or verify independently. The output should change behavior, not just decorate the response.
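
As a sketch of what framing could look like in code, the function below maps signals the system can actually explain to one of the three actions above, instead of emitting a bare percentage. The signals (`source_count`, `sources_agree`) and the rules are illustrative assumptions, not calibrated values.

```python
from enum import Enum


class NextStep(str, Enum):
    PROCEED = "move forward"
    INSPECT = "inspect further"
    VERIFY = "verify independently"


def recommend_next_step(source_count: int, sources_agree: bool) -> NextStep:
    """Map explainable signals to an action (illustrative rules, not calibrated)."""
    if source_count == 0:
        # Nothing to check the answer against.
        return NextStep.VERIFY
    if source_count >= 2 and sources_agree:
        # Multiple independent sources that agree.
        return NextStep.PROCEED
    # A single source, or sources that disagree.
    return NextStep.INSPECT


print(recommend_next_step(source_count=1, sources_agree=True).value)
```

Because each branch corresponds to a reason the interface can state in plain language, the signal stays explainable.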

Audit Logs Are Part of the Experience

One thing I have become much more opinionated about is auditability. Teams often think of audit logs as infrastructure work that exists somewhere behind the product. For AI systems, that is not enough.

When people rely on generated outputs in operational workflows, they need to know what happened. What input triggered the result? Which sources were used? What changed between one output and the next? Who had access to the workspace and the result set?

Those questions are not only for administrators. They affect support, debugging, customer trust, and internal confidence. A product that can explain its own history is easier to operate and much easier to defend.
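
The questions above translate fairly directly into the fields of an audit record. The following is a minimal in-memory sketch under that assumption. `AuditEntry`, `AuditLog`, and `sources_changed` are hypothetical names, and a real system would persist entries instead of holding them in a list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str
    actor: str                   # who triggered the run
    workspace: str               # where it ran
    input_text: str              # what input triggered the result
    source_ids: tuple[str, ...]  # which sources were used
    output_id: str


class AuditLog:
    """Append-only, in-memory log (illustrative only)."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, actor: str, workspace: str, input_text: str,
               source_ids: tuple[str, ...], output_id: str) -> AuditEntry:
        entry = AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            actor=actor,
            workspace=workspace,
            input_text=input_text,
            source_ids=source_ids,
            output_id=output_id,
        )
        self._entries.append(entry)
        return entry

    def history(self, workspace: str) -> list[AuditEntry]:
        # Entries for one workspace, in the order they were recorded.
        return [e for e in self._entries if e.workspace == workspace]

    def export_jsonl(self) -> str:
        # One JSON object per line, for support and debugging tools.
        return "\n".join(json.dumps(asdict(e)) for e in self._entries)


def sources_changed(prev: AuditEntry, curr: AuditEntry) -> tuple[set[str], set[str]]:
    """Return (added, removed) source ids between two outputs."""
    before, after = set(prev.source_ids), set(curr.source_ids)
    return after - before, before - after
```

With two entries recorded for the same question, `sources_changed` gives a direct answer to "what changed between one output and the next" at the level of sources.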

Access Control Is Product Design

Access control often gets postponed because it feels like platform work. But in multi-tenant AI products, permissions shape the product as much as the interface does.

Who can run a workflow? Who can see the source material? Who can export a result? Who can inspect logs? Those decisions change how safe the product feels and how confidently a team can adopt it.

The mistake is treating those questions as optional until later. Once the product starts growing, late access design becomes expensive and messy. Early access design creates better boundaries, cleaner mental models, and fewer emergency fixes.
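
Even a very small permission model makes those questions concrete early. The sketch below assumes a simple role-based scheme with a tenant check. The role names, the `Action` enum, and the permission table are invented for illustration and would differ in any real product.

```python
from enum import Enum


class Action(str, Enum):
    RUN_WORKFLOW = "run_workflow"
    VIEW_SOURCES = "view_sources"
    EXPORT_RESULT = "export_result"
    INSPECT_LOGS = "inspect_logs"


# Hypothetical roles; each maps to the actions it may perform.
ROLE_PERMISSIONS: dict[str, frozenset[Action]] = {
    "viewer": frozenset({Action.VIEW_SOURCES}),
    "analyst": frozenset(
        {Action.RUN_WORKFLOW, Action.VIEW_SOURCES, Action.EXPORT_RESULT}
    ),
    "admin": frozenset(Action),  # every action
}


def is_allowed(role: str, action: Action,
               user_tenant: str, resource_tenant: str) -> bool:
    # Tenant isolation comes first: no role grants cross-tenant access.
    if user_tenant != resource_tenant:
        return False
    # Unknown roles get no permissions: deny by default.
    return action in ROLE_PERMISSIONS.get(role, frozenset())


print(is_allowed("analyst", Action.INSPECT_LOGS, "acme", "acme"))
```

Denying by default for unknown roles and mismatched tenants keeps the failure mode safe when the table is incomplete.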

The Best Trust Signals Are Often Quiet

Trust does not always come from a visible badge or a large block of explanatory text. Sometimes it comes from smaller decisions:

- The product makes it easy to inspect the origin of an answer.
- The result updates clearly instead of changing silently.
- The user can see when a step is still in progress.
- The system shows where uncertainty exists instead of pretending it does not.

Those details rarely headline a launch announcement, but they have a large effect on whether the system feels credible after the novelty is gone.

The Question I Keep Returning To

Whenever I work on AI features now, I keep coming back to a simple question: what would help a thoughtful user trust this output for the right reason?

That question is useful because it pushes past surface polish. It forces clearer decisions around citations, confidence, access, and observability. It also keeps the product honest. If the answer is mostly branding or tone, the product probably is not ready yet.

AI products become far more valuable when they do more than generate answers: when they help people understand when those answers deserve confidence, when they need review, and how to inspect the path that produced them.

That is where trust starts to feel real.